A Thousand Splendid Refactorings

Refactoring legacy systems is messy, slow, and often avoided, but AI is starting to change that. This case study shows how combining AI coding tools with human ingenuity can turn a multi-year effort into something far more scalable and efficient.

It's often said that change is the only constant in life. That holds whether we're talking in the context of Greek philosophy or software engineering, though the interesting point these days is how AI coding tools are reshaping this constant for the latter.

Legacy systems are rigid and hard to change. Working with them takes more patience and care: there could be a binding here whose existence makes no sense, but removing it might break something on the far end of the system. Monolithic legacy systems take this even further, with complex bindings that make refactoring difficult.

What follows is a small case study on how we used AI coding tools to refactor one of our monoliths (more like a megalith actually; my brethren working on this repo would agree with me, I think) and pay off a decade-old debt that was also an indirect security flaw.

Headout has aged like wine. Sadly, a part of our codebase hasn't. It has a forever-expanding list of technical debts, including a debate on the very existence of one of the aforementioned monoliths. Technical debts are difficult to get rid of. Paying them off has no visible gain, which makes them a hard case to argue in front of stakeholders. And over time, with enough debt accumulated, it hampers the development experience, dropping the direct throughput of everyone working in and around it. What we might save by not picking up the debt now, we will inevitably have to pay in the future by navigating a debt-ridden codebase.

AI coding tools, on the other hand, are incredible at building new things, but they kind of fall apart when asked to refactor a significant amount of legacy code. At times they try rewriting the world (YOLO!) by changing everything they touch; at times they break legacy code by modifying entities that shouldn't be modified. It's like the wonderful xkcd cartoon on open source. You see that small pillar? That's exactly what AI today would think of refactoring and "enhancing".

But here's the interesting thing: garnish the raw, random refactorings that AI tools would pick with some human ingenuity and voila! The results are very satisfying.

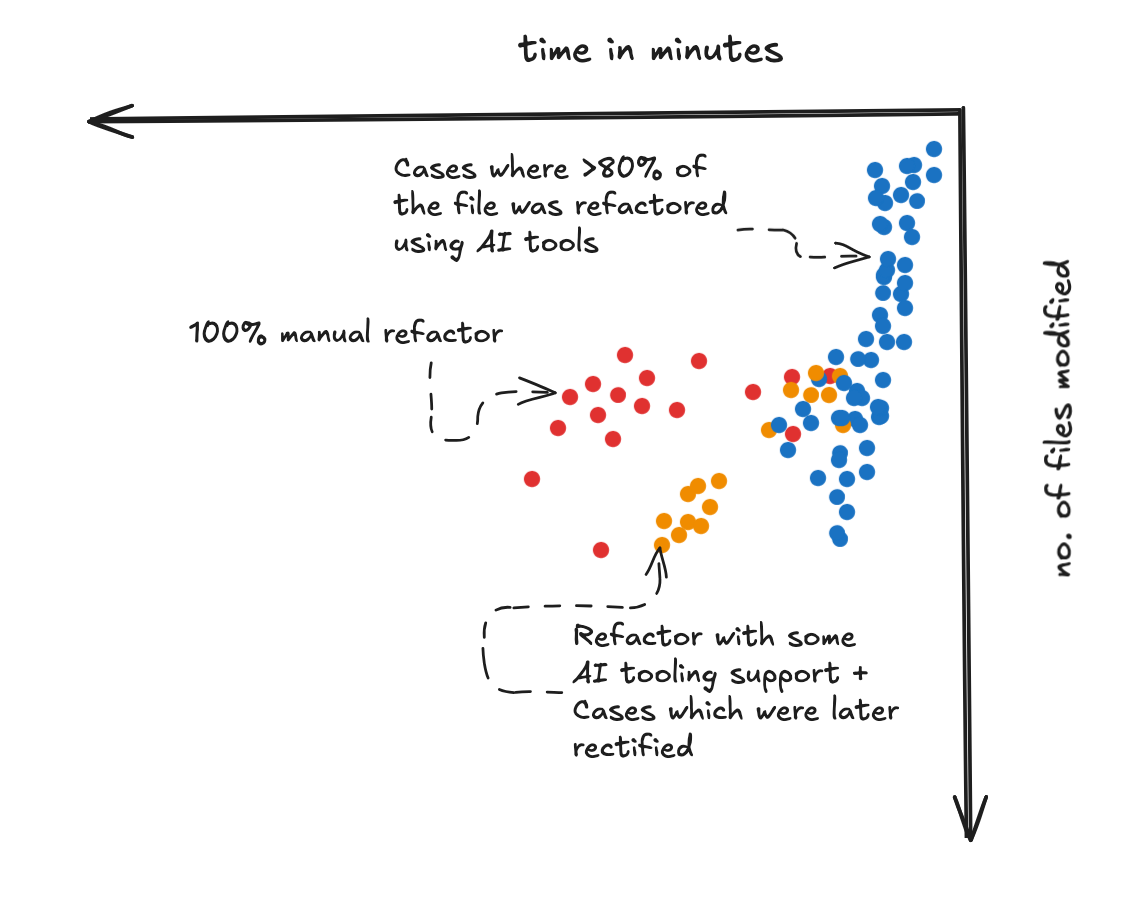

For reference, the refactor I talked about earlier was done in phases over 3 years. Again, a small reminder that a mega-refactor on an enterprise legacy system is a mammoth task. Now, if I were to plot the files refactored for this task against the time taken to wrap them up, the results get very gratifying.

The points were plotted using past references and git commit/change tracking. A human (me in this case) would rather commit a "bunch" of files once the changes for a particular component are wrapped up. The default AI tools (without any markdown engineering) would rather refactor and push files in smaller, more frequent batches. This can, of course, vary between humans and tools alike.

Why is this graph gratifying? Because, as Carl Sagan wrote, "never underestimate an exponential". The exponential here is the rate at which we can refactor a legacy system over time: over the course of this experiment, the tool eventually got better at refactoring and at applying the ingenuity I had applied during the "orange" (mixed) cases. This is a big win! Especially when the task at hand is a banal, "no-one-wants-to-do-it", boring change.
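If you want to build a similar graph for your own repo, here is a minimal sketch in Python of the bookkeeping behind it. It assumes you have already pulled (commit date, files changed) pairs out of your git history; the `cumulative_files` helper and the sample numbers are made up for illustration and are not the data behind the graph in this post.

```python
from datetime import date
from itertools import accumulate


def cumulative_files(commits):
    """Return (date, running total of files refactored) pairs in date order.

    `commits` is an iterable of (commit_date, files_changed) tuples.
    """
    ordered = sorted(commits, key=lambda c: c[0])
    running = accumulate(files for _, files in ordered)
    return [(day, total) for (day, _), total in zip(ordered, running)]


# Made-up sample data, NOT the real numbers from this refactor.
sample_commits = [
    (date(2024, 3, 1), 4),
    (date(2024, 1, 15), 10),
    (date(2024, 2, 1), 6),
]

# Each printed pair is one point on a "files refactored vs. time" curve.
for day, total in cumulative_files(sample_commits):
    print(day.isoformat(), total)
```

Keeping one series per contributor type (human, AI, mixed) and plotting them together would give roughly the comparison described above.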

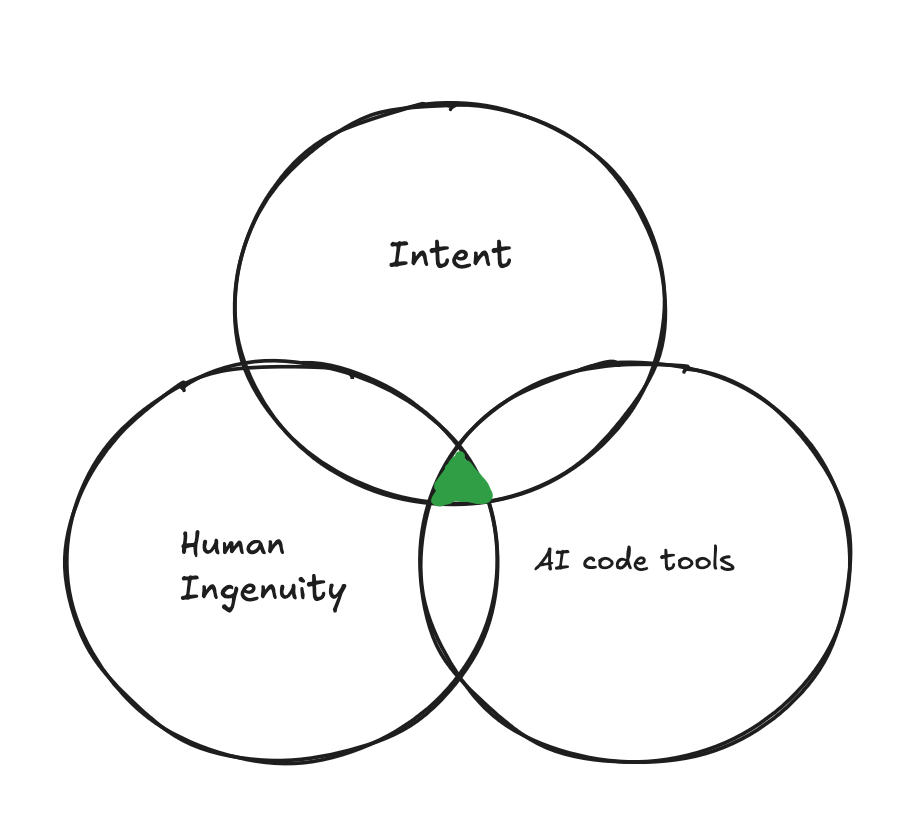

Human ingenuity then becomes the missing piece of this refactoring puzzle.

If I am allowed to shamelessly butcher the wonderful triangle of fulfillment by Kailash Nadh, retrofit it to my case study, and rechristen it the "Triangle of AI-driven fulfillment", it would look something like this:

Of course, this fits into the original triangle of fulfillment, since it is nothing but a derivation of it; the overarching idea remains the same. To truly achieve splendid refactoring results in this increasingly AI-driven world, we need not one, not two, but all three vertices of the triangle of AI-driven fulfillment. It is only through the union and balance of all three that we will truly increase the real throughput that throttles software engineering: the rate at which we handle our tech debt.